“免费”容器存储及备份恢复方案

云原生时代为什么还需要本地存储?

云原生时代,对于有存储应用的容器化上云,一般的解决思路是“计算存储分离”,计算层通过容器技术实现弹性伸缩,而相应的存储层也需要配合进行“动态挂载”,要实现动态挂载能力,使用基于网络的存储系统可能是最佳选择。然而,“网络”存储的磁盘IO性能差、自带高可用能力的中间件系统不需要存储层的“动态挂载” 等种种原因,业界对本地存储还是“青睐有加”。因此类似rabbitmq、kafka这样的中间件系统,优先使用本地盘,然后通过k8s增强自动化运维能力,解决原来磁盘手动管理问题,实现动态分配、扩容、隔离。

有没有更适合k8s的备份恢复方案?

传统的数据备份方案,一种是利用存储数据的服务端实现定期快照的备份,另一种是在每台目标服务器上部署专有备份agent并指定备份数据目录,定期把数据远程复制到外部存储上。这两种方式均存在“备份机制固化”、“数据恢复慢”等问题,无法适应容器化后的弹性、池化部署场景。我们需要更贴合k8s容器场景的备份恢复能力,实现一键备份、快速恢复。

目录

整体计划

- 准备一个k8s集群,master节点不跑workload,最好能有2个worker节点;

- 部署carina云原生本地容器存储方案,测试本地盘自动化管理能力

- 部署velero云原生备份方案,测试数据备份和恢复能力

k8s环境

- 版本:v1.19.14

- 集群规模:1master 2worker

- 磁盘挂载情况:除了根目录使用了一块独立盘,初始状态未使用其他的磁盘

部署carina

1、部署脚本

可以参考官方文档,部署方式区分1.22版本前后,大概率由于1.22版本很多API发生变更。

2、本地裸盘准备:

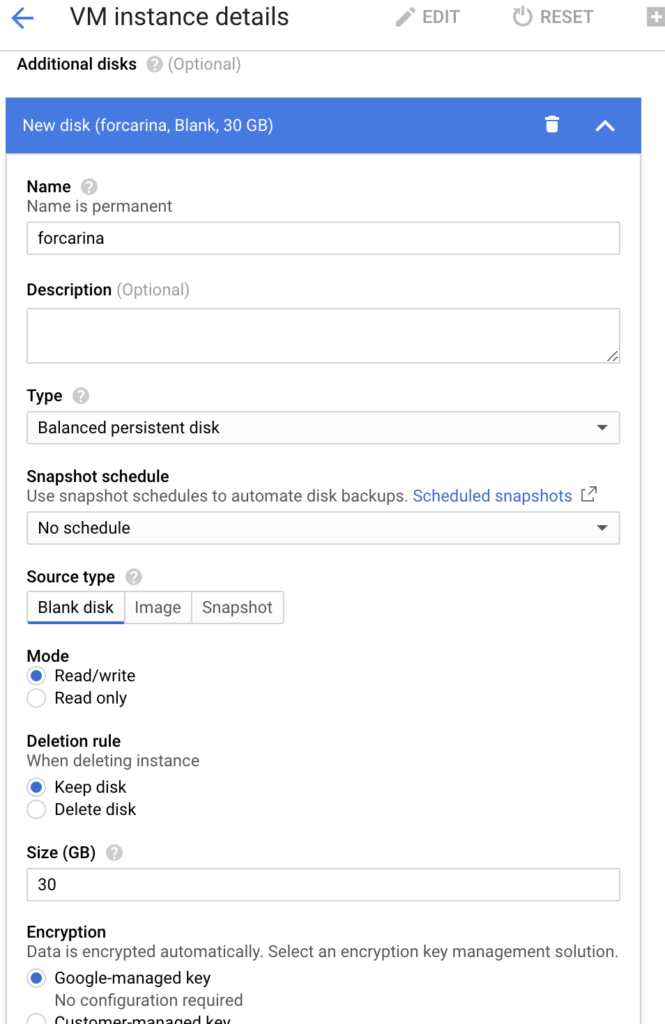

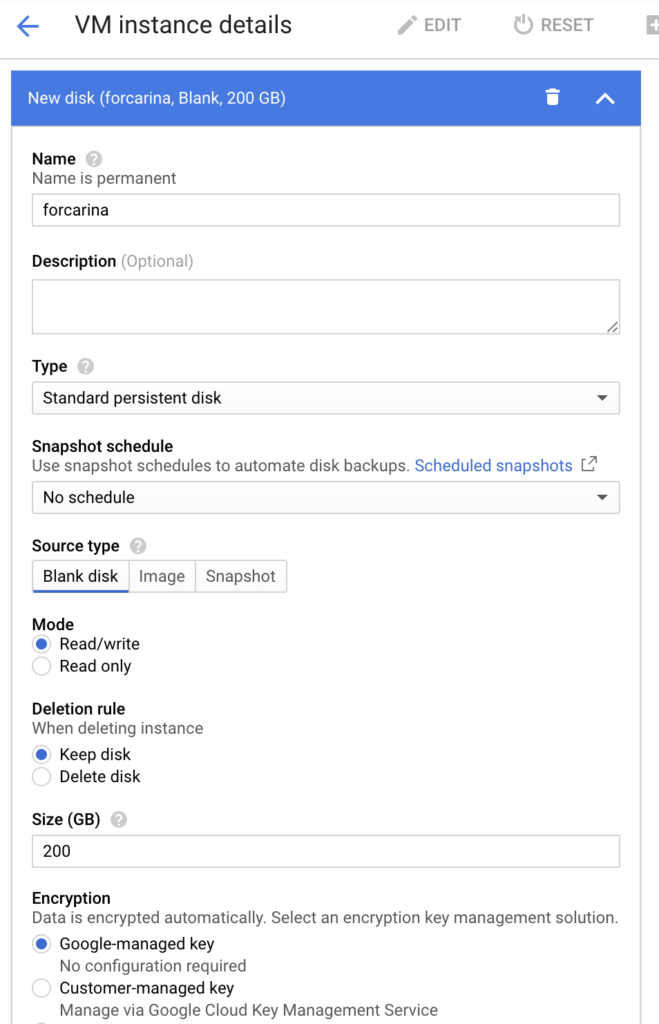

为每个worker挂载一块裸盘,建议至少20G,carina默认会占用10G磁盘空间,作为存储管理元数据。由于我自己使用了谷歌云,可以通过如下步骤实现:第一个是ssd,第二个是hdd。

3、确认carina组件能够搜索并读取到新挂载的裸盘,需要通过修改carina默认扫描本地盘的策略:

# 通过以下命令查看配置扫描策略的configmap

# 官网说明文档:https://github.com/carina-io/carina/blob/main/docs/manual/disk-manager.md

> kubectl describe cm carina-csi-config -n kube-system

Name: carina-csi-config

Namespace: kube-system

Labels: class=carina

Annotations: <none>

Data

====

config.json:

----

{

# 根据google cloud磁盘的命名规则作了更改,匹配以sd开头的磁盘

"diskSelector": ["sd+"],

# 扫描周期180秒

"diskScanInterval": "180",

"diskGroupPolicy": "type",

"schedulerStrategy": "spradout"

}

4、通过查看carina组件状态,确认本地盘已经被识别

> kubectl get node u20-w1 -o template --template={{.status.capacity}}

map[carina.storage.io/carina-vg-hdd:200 carina.storage.io/carina-vg-ssd:20 cpu:2 ephemeral-storage:30308240Ki hugepages-1Gi:0 hugepages-2Mi:0 memory:4022776Ki pods:110]

> kubectl get node u20-w1 -o template --template={{.status.allocatable}}

map[carina.storage.io/carina-vg-hdd:189 carina.storage.io/carina-vg-ssd:1 cpu:2 ephemeral-storage:27932073938 hugepages-1Gi:0 hugepages-2Mi:0 memory:3920376Ki pods:110]

# 可以看到hdd容量已经变成200了、SSD容量变成20,为什么能区分ssd和hdd呢?这里先按下不表

# 这里也能看到预留了10G空间不可使用,因为200G新盘刚加入只有189G(考虑到有磁盘单位换算带来的误差)可用。

# 这些信息很重要,当pv创建时会从该node信息中获取当前节点磁盘容量,然后根据pv调度策略进行调度

# 还有个集中查看磁盘使用情况的入口

> kubectl get configmap carina-node-storage -n kube-system -o json | jq .data.node

[

{

"allocatable.carina.storage.io/carina-vg-hdd":"189",

"allocatable.carina.storage.io/carina-vg-ssd":"1",

"capacity.carina.storage.io/carina-vg-hdd":"200",

"capacity.carina.storage.io/carina-vg-ssd":"20",

"nodeName":"u20-w1"

},

{

"allocatable.carina.storage.io/carina-vg-hdd":"189",

"allocatable.carina.storage.io/carina-vg-ssd":"0",

"capacity.carina.storage.io/carina-vg-hdd":"200",

"capacity.carina.storage.io/carina-vg-ssd":"0",

"nodeName":"u20-w2"

}

]

5、为什么能自动识别hdd和sdd呢?

# carina-node服务启动时会自动将节点上磁盘按照SSD和HDD进行分组并组建成vg卷组

# 使用命令lsblk --output NAME,ROTA查看磁盘类型,ROTA=1为HDD磁盘 ROTA=0为SSD磁盘

# 支持文件存储及块设备存储,其中文件存储支持xfs和ext4格式

# 下面是事前声明的storageclass,用来自动创建pv

> k get sc csi-carina-sc -o json | jq .metadata.annotations

{

"kubectl.kubernetes.io/last-applied-configuration":{

"allowVolumeExpansion":true,

"apiVersion":"storage.k8s.io/v1",

"kind":"StorageClass",

"metadata":{

"annotations":{},

"name":"csi-carina-sc"

},

"mountOptions":[

"rw"

],

"parameters":{

"csi.storage.k8s.io/fstype":"ext4"

},

"provisioner":"carina.storage.io",

"reclaimPolicy":"Delete",

"volumeBindingMode":"WaitForFirstConsumer"

}

}

测试carina自动分配PV能力

1、想要carina具备自动创建PV能力,需要先声明并创建storageclass。

# 创建storageclass的yaml --- apiVersion: storage.k8s.io/v1 kind: StorageClass metadata: name: csi-carina-sc provisioner: carina.storage.io # 这是该CSI驱动的名称,不允许更改 parameters: # 支持xfs,ext4两种文件格式,如果不填则默认ext4 csi.storage.k8s.io/fstype: ext4 # 这是选择磁盘分组,该项目会自动将SSD及HDD磁盘分组 # SSD:ssd HDD: hdd # 如果不填会随机选择磁盘类型 #carina.storage.io/disk-type: hdd reclaimPolicy: Delete allowVolumeExpansion: true # 支持扩容,定为true便可 # WaitForFirstConsumer表示被容器绑定调度后再创建pv volumeBindingMode: WaitForFirstConsumer # 支持挂载参数设置,这里配置为读写模式 mountOptions: - rw

kubectl apply后,可以通过以下命令确认:

> kubectl get sc csi-carina-sc -o json | jq .metadata.annotations

{

"kubectl.kubernetes.io/last-applied-configuration":{

"allowVolumeExpansion":true,

"apiVersion":"storage.k8s.io/v1",

"kind":"StorageClass",

"metadata":{

"annotations":{},

"name":"csi-carina-sc"

},

"mountOptions":[

"rw"

],

"parameters":{

"csi.storage.k8s.io/fstype":"ext4"

},

"provisioner":"carina.storage.io",

"reclaimPolicy":"Delete",

"volumeBindingMode":"WaitForFirstConsumer"

}

}

2、部署带存储的测试应用

测试场景比较简单,使用简单nginx服务,挂载数据盘,存放自定义html页面。

# pvc for nginx html

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: csi-carina-pvc-big

namespace: default

spec:

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 10Gi

# 指定carina的storageclass名称

storageClassName: csi-carina-sc

volumeMode: Filesystem

# nginx deployment yaml

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: carina-deployment-big

namespace: default

labels:

app: web-server-big

spec:

replicas: 1

selector:

matchLabels:

app: web-server-big

template:

metadata:

labels:

app: web-server-big

spec:

containers:

- name: web-server

image: nginx:latest

imagePullPolicy: "IfNotPresent"

volumeMounts:

- name: mypvc-big

mountPath: /usr/share/nginx/html # nginx默认将页面内容存放在这个文件夹

volumes:

- name: mypvc-big

persistentVolumeClaim:

claimName: csi-carina-pvc-big

readOnly: false

查看测试应用运行情况:

# pvc

> kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

csi-carina-pvc-big Bound pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 10Gi RWO csi-carina-sc 109s

# pv

> kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 10Gi RWO Delete Bound default/csi-carina-pvc-big csi-carina-sc 109s

# nginx pod

> kubectl get po -l app=web-server-big -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

carina-deployment-big-6b78fb9fd-mwf8g 1/1 Running 0 3m48s 10.0.2.69 u20-w2 <none> <none>

# 查看相关node的磁盘使用情况,可分配的大小已经发生变化,缩小了10

> kubectl get node u20-w2 -o template --template={{.status.allocatable}}

map[carina.storage.io/carina-vg-hdd:179 carina.storage.io/carina-vg-ssd:0 cpu:2 ephemeral-storage:27932073938 hugepages-1Gi:0 hugepages-2Mi:0 memory:3925000Ki pods:110]

登录运行测试服务的node节点,查看磁盘挂载情况:

# 磁盘分配情况如下,有meta和pool两个lvm

> lsblk

...

sdb 8:16 0 200G 0 disk

├─carina--vg--hdd-thin--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961_tmeta

│ 253:0 0 12M 0 lvm

│ └─carina--vg--hdd-thin--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961-tpool

│ 253:2 0 10G 0 lvm

│ ├─carina--vg--hdd-thin--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961

│ │ 253:3 0 10G 1 lvm

│ └─carina--vg--hdd-volume--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961

│ 253:4 0 10G 0 lvm /var/lib/kubelet/pods/57ded9fb-4c82-4668-b77b-7dc02ba05fc2/volumes/kubernetes.io~

└─carina--vg--hdd-thin--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961_tdata

253:1 0 10G 0 lvm

└─carina--vg--hdd-thin--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961-tpool

253:2 0 10G 0 lvm

├─carina--vg--hdd-thin--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961

│ 253:3 0 10G 1 lvm

└─carina--vg--hdd-volume--pvc--74e683f9--d2a4--40a0--95db--85d1504fd961

253:4 0 10G 0 lvm /var/lib/kubelet/pods/57ded9fb-4c82-4668-b77b-7dc02ba05fc2/volumes/kubernetes.io~

# vgs

> vgs

VG #PV #LV #SN Attr VSize VFree

carina-vg-hdd 1 2 0 wz--n- <200.00g 189.97g

# lvs

> lvs

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 carina-vg-hdd twi-aotz-- 10.00g 2.86 11.85

volume-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 carina-vg-hdd Vwi-aotz-- 10.00g thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 2.86

# 使用lvm命令行工具查看磁盘挂载信息

> pvs

PV VG Fmt Attr PSize PFree

/dev/sdb carina-vg-hdd lvm2 a-- <200.00g 189.97g

> pvdisplay

--- Physical volume ---

PV Name /dev/sdb

VG Name carina-vg-hdd

PV Size <200.00 GiB / not usable 3.00 MiB

Allocatable yes

PE Size 4.00 MiB

Total PE 51199

Free PE 48633

Allocated PE 2566

PV UUID Wl6ula-kD54-Mj5H-ZiBc-aHPB-6RHI-mXs9R9

> lvs

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 carina-vg-hdd twi-aotz-- 10.00g 2.86 11.85

volume-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 carina-vg-hdd Vwi-aotz-- 10.00g thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 2.86

> lvdisplay

--- Logical volume ---

LV Name thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961

VG Name carina-vg-hdd

LV UUID kB7DFm-dl3y-lmop-p7os-3EW6-4Toy-slX7qn

LV Write Access read/write (activated read only)

LV Creation host, time u20-w2, 2021-11-09 05:31:18 +0000

LV Pool metadata thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961_tmeta

LV Pool data thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961_tdata

LV Status available

# open 2

LV Size 10.00 GiB

Allocated pool data 2.86%

Allocated metadata 11.85%

Current LE 2560

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:2

--- Logical volume ---

LV Path /dev/carina-vg-hdd/volume-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961

LV Name volume-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961

VG Name carina-vg-hdd

LV UUID vhDYe9-KzPc-qqJk-2o1f-TlCv-0TDL-643b8r

LV Write Access read/write

LV Creation host, time u20-w2, 2021-11-09 05:31:19 +0000

LV Pool name thin-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961

LV Status available

# open 1

LV Size 10.00 GiB

Mapped size 2.86%

Current LE 2560

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:4

进入磁盘,添加自定义内容,查看存储情况

# 进入容器内,创建自定义页面 > kubectl exec -ti carina-deployment-big-6b78fb9fd-mwf8g -- /bin/bash /# cd /usr/share/nginx/html/ /usr/share/nginx/html# ls lost+found /usr/share/nginx/html# echo "hello carina" > index.html /usr/share/nginx/html# curl localhost hello carina /usr/share/nginx/html# echo "test carina" > test.html /usr/share/nginx/html# curl localhost/test.html test carina # 登录node节点,进入挂载点,查看上面刚刚创建的内容 > df -h ... /dev/carina/volume-pvc-74e683f9-d2a4-40a0-95db-85d1504fd961 9.8G 37M 9.7G 1% /var/lib/kubelet/pods/57ded9fb-4c82-4668-b77b-7dc02ba05fc2/volumes/kubernetes.io~csi/pvc-74e683f9-d2a4-40a0-95db-85d1504fd961/mount > cd /var/lib/kubelet/pods/57ded9fb-4c82-4668-b77b-7dc02ba05fc2/volumes/kubernetes.io~csi/pvc-74e683f9-d2a4-40a0-95db-85d1504fd961/mount > ll total 32 drwxrwsrwx 3 root root 4096 Nov 9 05:54 ./ drwxr-x--- 3 root root 4096 Nov 9 05:31 ../ -rw-r--r-- 1 root root 13 Nov 9 05:54 index.html drwx------ 2 root root 16384 Nov 9 05:31 lost+found/ -rw-r--r-- 1 root root 12 Nov 9 05:54 test.html

部署velero

1、下载velero命令行工具,本次使用的是1.7.0版本

> wget <https://github.com/vmware-tanzu/velero/releases/download/v1.7.0/velero-v1.7.0-linux-amd64.tar.gz> > tar -xzvf velero-v1.7.0-linux-amd64.tar.gz > cd velero-v1.7.0-linux-amd64 && cp velero /usr/local/bin/ > velero Velero is a tool for managing disaster recovery, specifically for Kubernetes cluster resources. It provides a simple, configurable, and operationally robust way to back up your application state and associated data. If you're familiar with kubectl, Velero supports a similar model, allowing you to execute commands such as 'velero get backup' and 'velero create schedule'. The same operations can also be performed as 'velero backup get' and 'velero schedule create'. Usage: velero [command] Available Commands: backup Work with backups backup-location Work with backup storage locations bug Report a Velero bug client Velero client related commands completion Generate completion script create Create velero resources debug Generate debug bundle delete Delete velero resources describe Describe velero resources get Get velero resources help Help about any command install Install Velero plugin Work with plugins restic Work with restic restore Work with restores schedule Work with schedules snapshot-location Work with snapshot locations uninstall Uninstall Velero version Print the velero version and associated image

2、部署minio对象存储,作为velero后端存储

为了部署velero服务端,需要优先准备好一个后端存储。velero支持很多类型的后端存储,详细看这里:https://velero.io/docs/v1.7/supported-providers/。只要遵循AWS S3存储接口规范的,都可以对接,本次使用兼容S3接口的minio服务作为后端存储,部署minio方式如下,其中就使用到了carina storageclass提供磁盘创建能力:

# 参考文档:https://velero.io/docs/v1.7/contributions/minio/

# 统一部署在minio命名空间

---

apiVersion: v1

kind: Namespace

metadata:

name: minio

# 为minio后端存储申请8G磁盘空间,走的就是carina storageclass

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: minio-storage-pvc

namespace: minio

spec:

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 8Gi

storageClassName: csi-carina-sc # 指定carina storageclass

volumeMode: Filesystem

---

apiVersion: apps/v1

kind: Deployment

metadata:

namespace: minio

name: minio

labels:

component: minio

spec:

strategy:

type: Recreate

selector:

matchLabels:

component: minio

template:

metadata:

labels:

component: minio

spec:

volumes:

- name: storage

persistentVolumeClaim:

claimName: minio-storage-pvc

readOnly: false

- name: config

emptyDir: {}

containers:

- name: minio

image: minio/minio:latest

imagePullPolicy: IfNotPresent

args:

- server

- /storage

- --config-dir=/config

- --console-address ":9001" # 配置前端页面的暴露端口

env:

- name: MINIO_ACCESS_KEY

value: "minio"

- name: MINIO_SECRET_KEY

value: "minio123"

ports:

- containerPort: 9000

- containerPort: 9001

volumeMounts:

- name: storage

mountPath: "/storage"

- name: config

mountPath: "/config"

# 使用nodeport创建svc,提供对外服务能力,包括前端页面和后端API

---

apiVersion: v1

kind: Service

metadata:

namespace: minio

name: minio

labels:

component: minio

spec:

# ClusterIP is recommended for production environments.

# Change to NodePort if needed per documentation,

# but only if you run Minio in a test/trial environment, for example with Minikube.

type: NodePort

ports:

- name: console

port: 9001

targetPort: 9001

- name: api

port: 9000

targetPort: 9000

protocol: TCP

selector:

component: minio

# 初始化创建velero的bucket

---

apiVersion: batch/v1

kind: Job

metadata:

namespace: minio

name: minio-setup

labels:

component: minio

spec:

template:

metadata:

name: minio-setup

spec:

restartPolicy: OnFailure

volumes:

- name: config

emptyDir: {}

containers:

- name: mc

image: minio/mc:latest

imagePullPolicy: IfNotPresent

command:

- /bin/sh

- -c

- "mc --config-dir=/config config host add velero <http://minio:9000> minio minio123 && mc --config-dir=/config mb -p velero/velero"

volumeMounts:

- name: config

mountPath: "/config"

部署minio完成后如下所示:

> kubectl get all -n minio NAME READY STATUS RESTARTS AGE pod/minio-686755b769-k6625 1/1 Running 0 6d15h NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE service/minio NodePort 10.98.252.130 <none> 36369:31943/TCP,9000:30436/TCP 6d15h NAME READY UP-TO-DATE AVAILABLE AGE deployment.apps/minio 1/1 1 1 6d15h NAME DESIRED CURRENT READY AGE replicaset.apps/minio-686755b769 1 1 1 6d15h replicaset.apps/minio-c9c844f67 0 0 0 6d15h NAME COMPLETIONS DURATION AGE job.batch/minio-setup 1/1 114s 6d15h

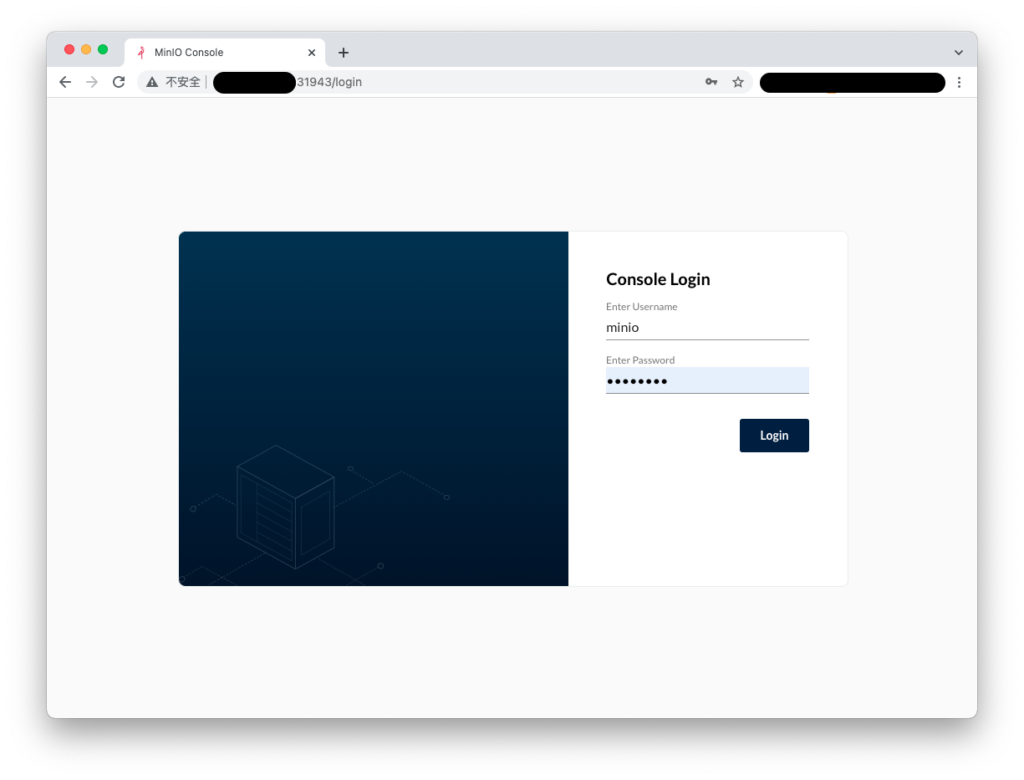

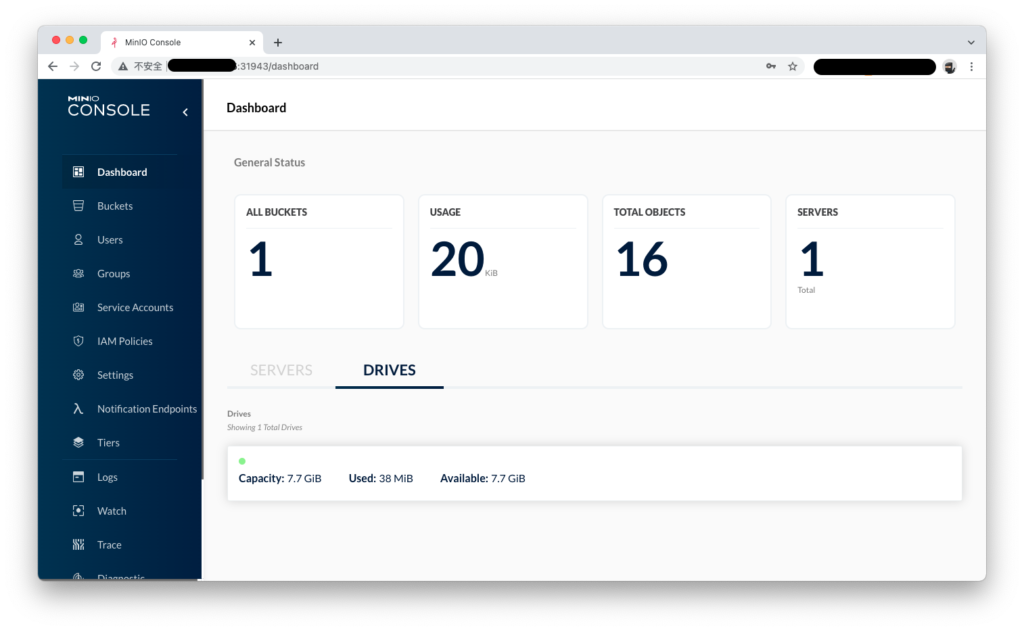

打开minio页面,使用部署时定义的账号登录:

3、安装velero服务端

# 注意添加--use-restric,开启pv数据备份

# 注意最后一行,使用了k8s svc域名服务,<http://minio.minio.svc:9000>

velero install

--provider aws

--plugins velero/velero-plugin-for-aws:v1.2.1

--bucket velero

--secret-file ./minio-cred

--namespace velero

--use-restic

--use-volume-snapshots=false

--backup-location-config region=minio,s3ForcePathStyle="true",s3Url=http://minio.minio.svc:9000

安装完成后如下所示:

# 除了velero之外, # 还有restic,它是负责备份pv数据的核心组件,需要保证每个节点上的pv都能备份,因此它使用了daemonset模式 # 详细参考:<https://velero.io/docs/v1.7/restic/> > kubectl get all -n velero NAME READY STATUS RESTARTS AGE pod/restic-g5q5k 1/1 Running 0 6d15h pod/restic-jdk7h 1/1 Running 0 6d15h pod/restic-jr8f7 1/1 Running 0 5d22h pod/velero-6979cbd56b-s7v99 1/1 Running 0 5d21h NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE daemonset.apps/restic 3 3 3 3 3 <none> 6d15h NAME READY UP-TO-DATE AVAILABLE AGE deployment.apps/velero 1/1 1 1 6d15h NAME DESIRED CURRENT READY AGE replicaset.apps/velero-6979cbd56b 1 1 1 6d15h

使用velero备份应用及其数据

- 使用velero backup命令备份带pv的测试应用

# 通过--selector选项指定应用标签

# 通过--default-volumes-to-restic选项显式声明使用restric备份pv数据

> velero backup create nginx-backup --selector app=web-server-big --default-volumes-to-restic

I1109 09:14:31.380431 1527737 request.go:655] Throttling request took 1.158837643s, request: GET:<https://10.140.0.8:6443/apis/networking.istio.io/v1beta1?timeout=32s>

Backup request "nginx-pv-backup" submitted successfully.

Run `velero backup describe nginx-pv-backup` or `velero backup logs nginx-pv-backup` for more details.

# 无法查看执行备份的日志,说是无法访问minio接口,很奇怪

> velero backup logs nginx-pv-backup

I1109 09:15:04.840199 1527872 request.go:655] Throttling request took 1.146201139s, request: GET:<https://10.140.0.8:6443/apis/networking.k8s.io/v1beta1?timeout=32s>

An error occurred: Get "<http://minio.minio.svc:9000/velero/backups/nginx-pv-backup/nginx-pv-backup-logs.gz?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=minio%2F20211109%2Fminio%2Fs3%2Faws4_request&X-Amz-Date=20211109T091506Z&X-Amz-Expires=600&X-Amz-SignedHeaders=host&X-Amz-Signature=b48c1988101b544329effb67aee6a7f83844c4630eb1d19db30f052e3603b9b2>": dial tcp: lookup minio.minio.svc on 127.0.0.53:53: no such host

# 可以看到详细的备份执行信息,

# 这个备份是会过期的,默认是1个月有效期

> velero backup describe nginx-pv-backup

I1109 09:15:25.834349 1527945 request.go:655] Throttling request took 1.147122392s, request: GET:<https://10.140.0.8:6443/apis/pkg.crossplane.io/v1beta1?timeout=32s>

Name: nginx-pv-backup

Namespace: velero

Labels: velero.io/storage-location=default

Annotations: velero.io/source-cluster-k8s-gitversion=v1.19.14

velero.io/source-cluster-k8s-major-version=1

velero.io/source-cluster-k8s-minor-version=19

Phase: Completed

Errors: 0

Warnings: 1

Namespaces:

Included: *

Excluded: <none>

Resources:

Included: *

Excluded: <none>

Cluster-scoped: auto

Label selector: app=web-server-big

Storage Location: default

Velero-Native Snapshot PVs: auto

TTL: 720h0m0s

Hooks: <none>

Backup Format Version: 1.1.0

Started: 2021-11-09 09:14:32 +0000 UTC

Completed: 2021-11-09 09:15:05 +0000 UTC

Expiration: 2021-12-09 09:14:32 +0000 UTC

Total items to be backed up: 9

Items backed up: 9

Velero-Native Snapshots: <none included>

Restic Backups (specify --details for more information):

Completed: 1

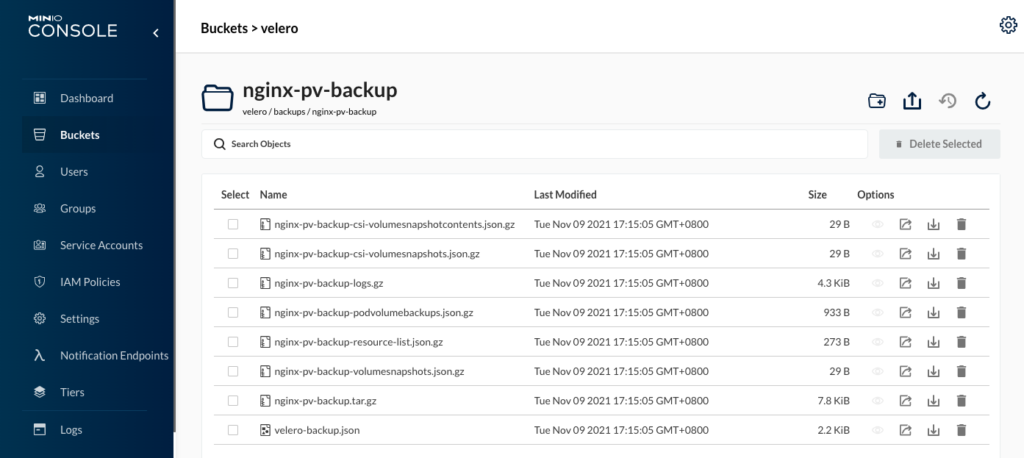

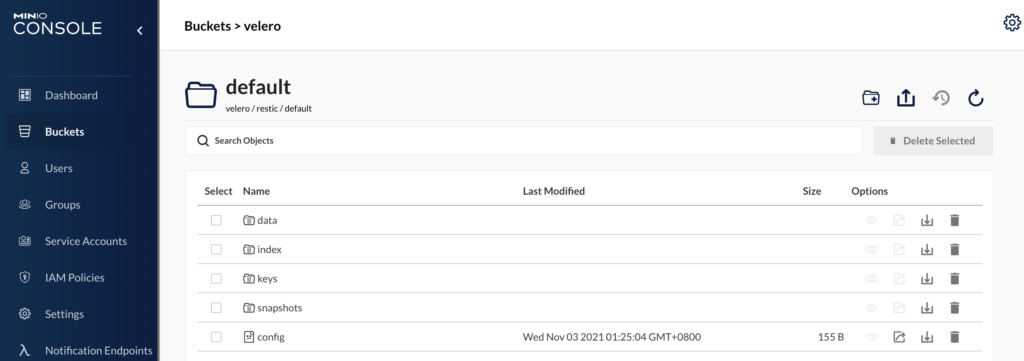

2、打开minio页面,查看备份数据的明细

在backups文件夹下有个nginx-pv-backup文件夹,里面有很多压缩文件,有机会可以分析下。

在restric文件夹下,产生了一堆数据,查了资料,它是加密保存的,因此无法显性看出备份pv的数据。接下来我们尝试下恢复能力,就能验证其数据备份能力。

使用velero恢复应用及其数据

1、删除测试应用及其数据

# delete nginx deployment > kubectl delete deploy carina-deployment-big # delete nginx pvc # 检查pv是否已经释放 > kubectl delete pvc csi-carina-pvc-big

2、通过velero restore恢复测试应用和数据

# 恢复 > velero restore create --from-backup nginx-pv-backup Restore request "nginx-pv-backup-20211109094323" submitted successfully. Run `velero restore describe nginx-pv-backup-20211109094323` or `velero restore logs nginx-pv-backup-20211109094323` for more details. root@u20-m1:/home/legendarilylwq# velero restore describe nginx-pv-backup-20211109094323 I1109 09:43:50.028122 1534182 request.go:655] Throttling request took 1.161022334s, request: GET:<https://10.140.0.8:6443/apis/networking.k8s.io/v1?timeout=32s> Name: nginx-pv-backup-20211109094323 Namespace: velero Labels: <none> Annotations: <none> Phase: InProgress Estimated total items to be restored: 9 Items restored so far: 9 Started: 2021-11-09 09:43:23 +0000 UTC Completed: <n/a> Backup: nginx-pv-backup Namespaces: Included: all namespaces found in the backup Excluded: <none> Resources: Included: * Excluded: nodes, events, events.events.k8s.io, backups.velero.io, restores.velero.io, resticrepositories.velero.io Cluster-scoped: auto Namespace mappings: <none> Label selector: <none> Restore PVs: auto Restic Restores (specify --details for more information): New: 1 Preserve Service NodePorts: auto > velero restore get NAME BACKUP STATUS STARTED COMPLETED ERRORS WARNINGS CREATED SELECTOR nginx-pv-backup-20211109094323 nginx-pv-backup Completed 2021-11-09 09:43:23 +0000 UTC 2021-11-09 09:44:14 +0000 UTC 0 2 2021-11-09 09:43:23 +0000 UTC <none>

3、验证测试应用和数据是否恢复

# 查看po、pvc、pv是否自动恢复创建 > kubectl get po NAME READY STATUS RESTARTS AGE carina-deployment-big-6b78fb9fd-mwf8g 1/1 Running 0 93s kubewatch-5ffdb99f79-87qbx 2/2 Running 0 19d static-pod-u20-w1 1/1 Running 15 235d > kubectl get pvc NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE csi-carina-pvc-big Bound pvc-e81017c5-0845-4bb1-8483-a31666ad3435 10Gi RWO csi-carina-sc 100s > kubectl get pv NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE pvc-a07cac5e-c38b-454d-a004-61bf76be6516 8Gi RWO Delete Bound minio/minio-storage-pvc csi-carina-sc 6d17h pvc-e81017c5-0845-4bb1-8483-a31666ad3435 10Gi RWO Delete Bound default/csi-carina-pvc-big csi-carina-sc 103s > kubectl get deploy NAME READY UP-TO-DATE AVAILABLE AGE carina-deployment-big 1/1 1 1 2m20s kubewatch 1/1 1 1 327d # 进入容器,验证自定义页面是否还在 > kubectl exec -ti carina-deployment-big-6b78fb9fd-mwf8g -- /bin/bash /# cd /usr/share/nginx/html/ /usr/share/nginx/html# ls -l total 24 -rw-r--r-- 1 root root 13 Nov 9 05:54 index.html drwx------ 2 root root 16384 Nov 9 05:31 lost+found -rw-r--r-- 1 root root 12 Nov 9 05:54 test.html /usr/share/nginx/html# curl localhost hello carina /usr/share/nginx/html# curl localhost/test.html test carina

4、仔细分析恢复过程

下面是恢复后的测试应用的详细信息,有个新增的init container,名叫restric-wait,它自身使用了一个磁盘挂载。

k describe po carina-deployment-big-6b78fb9fd-mwf8g

Name: carina-deployment-big-6b78fb9fd-mwf8g

Namespace: default

Priority: 0

Node: u20-w2/10.140.0.13

Start Time: Tue, 09 Nov 2021 09:43:47 +0000

Labels: app=web-server-big

pod-template-hash=6b78fb9fd

velero.io/backup-name=nginx-pv-backup

velero.io/restore-name=nginx-pv-backup-20211109094323

Annotations: <none>

Status: Running

IP: 10.0.2.227

IPs:

IP: 10.0.2.227

Controlled By: ReplicaSet/carina-deployment-big-6b78fb9fd

Init Containers:

restic-wait:

Container ID: containerd://ec0ecdf409cc60790fe160d4fc3ba0639bbb1962840622dc20dcc6ccb10e9b5a

Image: velero/velero-restic-restore-helper:v1.7.0

Image ID: docker.io/velero/velero-restic-restore-helper@sha256:6fce885ce23cf15b595b5d3b034d02a6180085524361a15d3486cfda8022fa03

Port: <none>

Host Port: <none>

Command:

/velero-restic-restore-helper

Args:

f4ddbfca-e3b4-4104-b36e-626d29e99334

State: Terminated

Reason: Completed

Exit Code: 0

Started: Tue, 09 Nov 2021 09:44:12 +0000

Finished: Tue, 09 Nov 2021 09:44:14 +0000

Ready: True

Restart Count: 0

Limits:

cpu: 100m

memory: 128Mi

Requests:

cpu: 100m

memory: 128Mi

Environment:

POD_NAMESPACE: default (v1:metadata.namespace)

POD_NAME: carina-deployment-big-6b78fb9fd-mwf8g (v1:metadata.name)

Mounts:

/restores/mypvc-big from mypvc-big (rw)

/var/run/secrets/kubernetes.io/serviceaccount from default-token-kw7rf (ro)

Containers:

web-server:

Container ID: containerd://f3f49079dcd97ac8f65a92f1c42edf38967a61762b665a5961b4cb6e60d13a24

Image: nginx:latest

Image ID: docker.io/library/nginx@sha256:644a70516a26004c97d0d85c7fe1d0c3a67ea8ab7ddf4aff193d9f301670cf36

Port: <none>

Host Port: <none>

State: Running

Started: Tue, 09 Nov 2021 09:44:14 +0000

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/usr/share/nginx/html from mypvc-big (rw)

/var/run/secrets/kubernetes.io/serviceaccount from default-token-kw7rf (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

mypvc-big:

Type: PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)

ClaimName: csi-carina-pvc-big

ReadOnly: false

default-token-kw7rf:

Type: Secret (a volume populated by a Secret)

SecretName: default-token-kw7rf

Optional: false

QoS Class: Burstable

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 7m20s carina-scheduler pod 6b8cf52c-7440-45be-a117-8f29d1a37f2c is in the cache, so can't be assumed

Warning FailedScheduling 7m20s carina-scheduler pod 6b8cf52c-7440-45be-a117-8f29d1a37f2c is in the cache, so can't be assumed

Normal Scheduled 7m18s carina-scheduler Successfully assigned default/carina-deployment-big-6b78fb9fd-mwf8g to u20-w2

Normal SuccessfulAttachVolume 7m18s attachdetach-controller AttachVolume.Attach succeeded for volume "pvc-e81017c5-0845-4bb1-8483-a31666ad3435"

Normal Pulling 6m59s kubelet Pulling image "velero/velero-restic-restore-helper:v1.7.0"

Normal Pulled 6m54s kubelet Successfully pulled image "velero/velero-restic-restore-helper:v1.7.0" in 5.580228382s

Normal Created 6m54s kubelet Created container restic-wait

Normal Started 6m53s kubelet Started container restic-wait

Normal Pulled 6m51s kubelet Container image "nginx:latest" already present on machine

Normal Created 6m51s kubelet Created container web-server

Normal Started 6m51s kubelet Started container web-server

感兴趣希望深入研究整体恢复应用的过程,可以参考官网链接:https://velero.io/docs/v1.7/restic/#customize-restore-helper-container,代码实现看这里:https://github.com/vmware-tanzu/velero/blob/main/pkg/restore/restic_restore_action.go

以上通过step by step演示了如何使用carina和velero实现容器存储自动化管理和数据的快速备份和恢复,这次虽然只演示了部分功能(还有如磁盘读写限速、按需备份恢复等高级功能等待大家去尝试),但从结果可以看出,已实现了有存储应用的快速部署、备份和恢复,给k8s集群异地灾备方案带来了“简单”的选择。我自己也准备把Wordpress博客也用这个方式备份起来。

参考链接

- https://mp.weixin.qq.com/s/VC6kVfcBCUQfG6RwM6F1QA 使用 Velero 备份还原 Kubernetes 集群